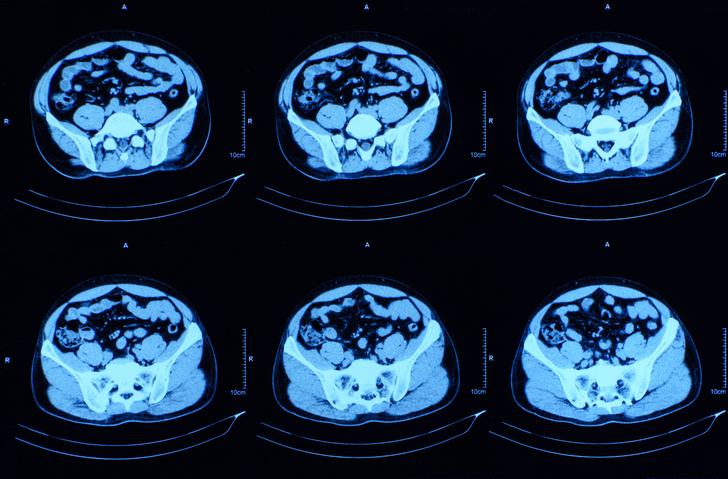

As a computed tomography (CT) scanner boots up, a patient lies still beneath a lead-mixed rubber apron. The radiologist moves the scanner into position, slips behind a wall, and captures a CT image. Almost instantaneously, the scan materializes digitally, but what follows is slower analytical work: a radiologist scrolling through layers of scans, comparing them to prior scans and studies, dictating findings into a microphone, and crafting a report in which every word carries clinical weight.

This imaging procedure is performed more than 85 million times a year in the United States and approximately 300 million times worldwide, and the number is increasing year over year. Demand for CT scans is rapidly outpacing the supply of radiologists, and radiologists across the U.S. report mounting workloads. Imaging volumes are estimated to be increasing by about 4% per year, driven by better technology, expanding indications, and declining costs. The radiology workforce, by contrast, is growing at roughly 0.4% annually, a tenfold difference in growth rates whose effect compounds every year.
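
To make that gap concrete, here is a back-of-the-envelope sketch of how the two growth rates compound. The 4% and 0.4% figures are the estimates cited above; the constant-rate assumption, the time horizons, and the function name `relative_workload` are illustrative choices, not reported data.

```python
# Illustrative arithmetic only. The two growth rates are the estimates
# quoted in the text; holding them constant over time is an assumption.
IMAGING_GROWTH = 0.04      # imaging volume: about 4% more studies per year
WORKFORCE_GROWTH = 0.004   # radiology workforce: about 0.4% more per year


def relative_workload(years: int) -> float:
    """Studies per radiologist after `years`, relative to today (1.0)."""
    studies = (1 + IMAGING_GROWTH) ** years
    radiologists = (1 + WORKFORCE_GROWTH) ** years
    return studies / radiologists


if __name__ == "__main__":
    for years in (1, 5, 10):
        ratio = relative_workload(years)
        print(f"after {years:>2} years: {ratio:.2f}x studies per radiologist")
```

Under these assumptions, the number of studies per radiologist rises by about 3.6% a year, which adds up to roughly 40% more work per radiologist after a decade.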

The problem of limited resources, not just in radiology, drew a trio of young Stanford researchers—Louis Blankemeier, PhD, Zhihong Chen, PhD, and Akshay Chaudhari, PhD—who founded healthcare AI company Cognita Imaging in 2024. “It’s a crisis,” Blankemeier told Inside Precision Medicine. “If you talk to any radiologist, they’ll tell you that it’s totally underwater. Their caseloads are increasing every day. Radiologists are having to just write off contracts that they have because they just can’t keep up. The workload is so bad, radiologists are retiring. Radiology is maybe the most extreme example of this shortage that’s happening. If we don’t find a solution to this, the field will collapse.”

This week, Cognita announced two milestones that could fundamentally change that process and reduce the radiologist’s workload. On Wednesday, Cognita published a paper in Nature unveiling Merlin, a vision-language model (VLM) for abdominal CT. Today, Cognita announced that it received an FDA Breakthrough Device designation for its Cognita Chest X-Ray (CXR) report generation tool across multiple critical indications. The industry-first generative VLM supports radiologists in interpreting chest X-rays and is the first radiology generative AI model, and one of few radiology AI solutions, to earn this designation.

Radiology Partners, the largest U.S. radiology practice, acquired Cognita in November 2025 through Mosaic Clinical Technologies, its technology and AI services division. With that acquisition and this week’s announcements, Cognita is leading a new generation of medical AI systems that interpret entire imaging studies and generate draft radiology reports rather than just flagging an abnormality.

From narrow AI to “self-driving” radiology

The seeming ease of “getting a scan” belies the intricacy behind it. A modern CT study can contain up to a billion pixels of data spanning various phases and angles. A radiologist may need to compare the present scan with imaging studies done months or even years ago. With thousands of potential diagnoses to consider, radiologists must assess minute variations in the density, shape, and texture of tissues.

Radiologists rely on nearly a decade of training to interpret these scans, equipping them to handle common, everyday conditions as well as exceptional cases. For example, with approximately 10,000 rare diseases, if each appears in one out of every 10,000 patients, a radiologist may see any given one only once or twice in a career but is still expected to recognize and synthesize these rare presentations accurately.

For each case, regardless of frequency, radiologists review the multiple CT scans, taken at slightly different angles of the body, that a patient gets in a single study. “It can include imaging from a prior study that a patient had, say, a year ago, and they will look through these studies, which can have up to a billion pixels of data,” Blankemeier said. “These studies often encode entire textbooks of medical information. Radiologists have to draw on almost a decade of training to understand what’s going on.”

After reviewing the images in each case, radiologists write long-form reports describing everything they see: the location of findings, their severity, size, uncertainty, and how they compare to previous studies. The reports can run multiple paragraphs, and precision is critical—a misplaced modifier or ambiguous phrase can alter clinical decisions downstream.

AI has been touted as a solution for radiology’s strain. Yet most commercial AI tools in imaging focus on detecting a single condition, such as a lung nodule, a brain bleed, or a fracture, and returning a binary yes-or-no output. Blankemeier describes those tools as analogous to “lane assist” in a car: helpful, but narrow in scope.

Cognita is attempting something much broader. Its system is a vision-language model (VLM) trained to map directly from the raw pixels of X-rays and CT scans to full, structured radiology reports. Instead of answering a single diagnostic question, the model generates a comprehensive narrative covering potentially tens of thousands of findings and modifiers. “It’s much more complex than people realize,” Blankemeier said. “You’re not just looking for one disease. You’re describing everything you see.”

The company’s roots trace back to its founders’ PhD research. At Stanford, Blankemeier, Chen, and Chaudhari wanted to apply emerging AI techniques to medicine. Radiology, unlike pathology, was already fully digitized. Imaging studies existed as digital files, making them theoretically amenable to machine learning. But early models were not ready for clinical deployment. At the time, VLMs performed well on tasks like image captioning or object detection, but radiology had particular obstacles: massive image sizes, 3D volumes, long and nuanced text outputs, and high-stakes decision-making.

“We decided to spend our PhDs trying to work on this problem, but the models were not ready yet for a company,” said Blankemeier. “We spent six years trying to refine the models and make them work in the real world. You can’t just use off-the-shelf models and fine-tune them on radiology images. With the billion pixels and the long reports, it’s very different than tasks that VLMs are excellent at. We had to build something customized and special here. Towards the end of our PhDs, the models were better. They still weren’t quite ready, but we thought that if we could put together all the pieces, like a huge data set, lots of compute, and the right team, we could create models that would be ready for the real world.”

Birth of a wizard

In coding or mathematics, AI systems can be trained using reinforcement learning because outputs can be objectively verified. Code either runs or it doesn’t. A math solution is either correct or incorrect. Radiology is different. A single “right” answer is uncommon. Reports contain nuance, uncertainty, and interpretation. Even human-generated reports contain occasional errors, especially under heavy workloads. “One of the big challenges is that radiologists are under so much pressure,” Blankemeier said. “They’re human. They make some mistakes.”

Training on imperfect data while aiming to surpass that baseline required new methods, which is where Cognita’s new paper on Merlin comes in. The Nature article focuses on abdomen-pelvis CT, one of radiology’s most complex exam types. An abdomen-pelvis CT builds 3D images from many slices and can reveal a wide range of problems, including tumors, blood vessel abnormalities, inflammation, and unexpected findings.

To gather all this information and quickly create a radiology report, Blankemeier and his team created Merlin, a 3D vision-language model that learns to interpret CT scans from routine hospital data without requiring additional manual annotation. Merlin was pretrained using more than 15,000 CT scans (over six million images) paired with 1.8 million electronic health record (EHR) diagnosis codes and six million words from radiology reports. By integrating structured clinical data with free-text reports, Merlin builds a broad understanding of anatomy and disease patterns.

The team evaluated Merlin across 752 tasks spanning diagnosis, prognosis, and image analysis. Even without task-specific fine-tuning, the model could classify radiologic findings, identify hundreds of clinical phenotypes, and retrieve relevant text from images. With adaptation, Merlin performed five-year disease prediction, automated report generation, and 3D organ segmentation, which involves dividing a three-dimensional image into distinct sections for analysis.

In internal testing and at several outside hospitals, Merlin matched or outperformed other specialized systems while remaining efficient enough for health systems to train similar models without extensive resources. With the publication of Merlin, Cognita made its comprehensive imaging dataset public. According to Blankemeier, that’s significant because radiology data at this scale are rare, particularly those scrubbed of protected health information and cleared for public release.

“This data set that we have is so diverse that it covers almost every manufacturer and patient population in the U.S.,” said Blankemeier. “Because of that, our models are very robust to these differences. Our models have seen all these types of scanners during their training process. They learn to interpret images from all the scanners, and the model weights probably tell them the manufacturer just by looking at the images and pixels.”

A day after the publication of Merlin, Cognita announced that it had received Breakthrough Device designation from the FDA for its chest X-ray report generation tool across a set of critical findings whose detection is considered potentially life-saving. The tool is not Merlin itself but a new AI system built from what the team learned on the Merlin project. The designation is intended to expedite development and regulatory review of medical technologies that may offer substantial improvement over existing standards of care. It does not constitute approval, but it enables more intensive interaction with the agency and potentially a faster path to clearance.

According to Blankemeier, this is the first time a general-purpose VLM in radiology has received Breakthrough status across multiple findings rather than a single narrow indication. Broader clinical use will require formal FDA clearance or approval. “To the best of our knowledge, this is the first VLM that’s received FDA Breakthrough Device designation,” said Blankemeier. “This will just allow us to accelerate the FDA process to get approval eventually. Right now our models are being used under research IRBs, and the data that we’re seeing is super exciting.”

From meniscus to mental health

A central piece of Cognita’s strategy has been its partnership with Radiology Partners, the largest radiology practice in the United States. The organization serves hospitals in all 50 U.S. states and, over more than a decade, has aggregated imaging data from across its nationwide network. Because the group employs the radiologists who interpret scans for these hospitals, it has built what Cognita describes as an unusually comprehensive archive of imaging studies and clinical reports. “It’s this amazing archive of data,” Blankemeier said. “They do about 16 million studies a year, so we essentially have this live feed of data coming in.”

The scale of that dataset proved critical for training Cognita’s models. With access to such a diverse stream of imaging data, the company says it has been able to build systems that perform competitively with human radiologists in many settings. Under research conditions, Cognita reports seeing three to four times fewer significant errors compared with human readers alone, though clinicians still oversee every case.

The partnership also shaped Cognita’s business strategy. Rather than raising a traditional Series A round, the company opted to integrate closely with Radiology Partners, allowing it to build what Blankemeier calls a “full-stack” radiology platform. Cognita trains the models, while the joint effort is also developing the underlying infrastructure, reporting tools, and viewing interfaces used by radiologists. That structure creates a continuous feedback loop: radiologists edit AI-generated draft reports directly within the system, and those edits can be used to improve the model over time. “There’s almost no example in healthcare or AI where your users edit your outputs at the word level,” Blankemeier said. “That feedback loop is incredibly powerful.”
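
What a word-level edit signal might look like can be sketched in a few lines. This is only an illustration of the general idea, using Python's standard `difflib` module. The function name `word_level_edits` and the two example sentences are hypothetical, and nothing here reflects how Cognita actually captures or uses radiologists' edits.

```python
import difflib


def word_level_edits(draft: str, final: str) -> list[tuple[str, str, str]]:
    """Return (operation, draft_words, final_words) for each changed span.

    Hypothetical helper: compares an AI-generated draft with the
    radiologist's signed-off text, one whitespace-separated word at a time.
    """
    draft_words = draft.split()
    final_words = final.split()
    matcher = difflib.SequenceMatcher(a=draft_words, b=final_words, autojunk=False)
    edits = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            continue  # unchanged words carry no correction signal
        edits.append((tag, " ".join(draft_words[i1:i2]), " ".join(final_words[j1:j2])))
    return edits


if __name__ == "__main__":
    # Made-up sentences for illustration; not taken from any real report.
    draft = "No focal consolidation. Small left pleural effusion."
    final = "No focal consolidation. Moderate left pleural effusion."
    print(word_level_edits(draft, final))
```

In this made-up example the only recorded edit is the replacement of "Small" with "Moderate", the kind of single-word correction that changes the clinical meaning of a sentence.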

Blankemeier said that Cognita’s roadmap extends beyond drafting reports. The first phase, now well underway, focuses on replicating and accelerating what radiologists already do—acting as a supervised “self-driving” system in which AI generates drafts and humans retain ultimate authority.

The second phase centers on what Blankemeier calls “opportunistic imaging.” Imaging studies contain more information than is typically reported. Subtle patterns in abdominal CT scans, for example, may correlate with cardiovascular risk, metabolic disease, or other systemic conditions. Extracting these latent biomarkers could enable earlier intervention and more personalized medicine. Blankemeier points to prior research suggesting that even mental health signals may be detectable in imaging data, highlighting how much information remains untapped.

The third phase would integrate imaging with additional modalities, including pathology, EHRs, laboratory values, and omics data. Fully digitized and information-rich, radiology serves as a foundation for building broader multimodal models. The company has shown that it can do well with X-rays and CT scans, and it is now moving into MRI, mammography, and PET-CT. Many of these modalities involve 3D data, presenting additional computational challenges but following a similar conceptual framework.

Looking further ahead, Blankemeier envisions a future in which individuals might undergo periodic whole-body MRI scans. By tracking changes longitudinally, AI could distinguish stable findings from evolving disease, potentially reducing false positives and boosting confidence in early detection. “A future that we really get excited about is one where everyone can get an MRI scan every six months and understand what’s going on in their bodies, and you can predict future things based on that imaging,” said Blankemeier. “That could be the future.”

For now, Cognita’s ambitions are grounded in a more immediate problem: stabilizing a field under mounting pressure. With a high-profile publication in Nature and an FDA Breakthrough designation, the company has signaled both scientific credibility and regulatory momentum. Whether its models can earn widespread clinical trust and reshape radiology’s daily workflow will depend on continued validation, oversight, and careful integration into practice. But as imaging volumes continue to climb and the workforce gap widens, the need for scalable solutions is no longer abstract. Every day, a patient moves from the waiting room to a chair outside the room with the CT scanner, sitting in the quiet interval between scans and reports.